7 Cyberlearning Technologies Transforming Education

Progenitor X is based on research being conducted at the Morgridge Institute for Research and the Wisconsin School of Medicine and Public Health.

By exploring data gleaned from users playing the game, they have identified patterns in play within and across players (using data mining and learning analytic techniques) and have developed statistical methods for testing hypotheses related to content models.

"These projects are not only using technologies that only recently became possible. They also build on decades of excellent research on how people learn," said Chris Hoadley, the program officer at NSF who leads the Cyberlearning program. "I believe it's only by advancing technology design and learning research together that we'll be able to imagine the future of learning."

[In case you think all of Squire's work involves a gloomy outlook, he's also taken up the Dalai Lama's challenge to developers to create a video game that promotes mindfulness. His group's effort, Tenacity, is currently under development.]

3. Teaching Tykes to Program: Never Too Young to Control a Robot

Playgrounds are popular spaces for young children to play and learn. They promote exploration of the physical environment and the development of motor and social skills, allowing young children to be autonomous while developing core competencies.

Playpens, by contrast, corral children into safe, confined spaces. Although they are mostly risk-free, there is little opportunity for exploration and imaginative play.

From a developmental perspective, the playground promotes a sense of mastery, creativity, self-confidence, social awareness and open exploration, while the playpen hinders the development of these traits.

"We're trying to develop technologies to get us as close as we can to the metaphor of the playground," said Marina Umaschi Bers, professor of Computer Science and Child Development at Tufts University, director of the DevTech research group and author of Designing Digital Experiences for Positive Youth Development: From Playpen to Playground.

Dancing with KIWI robots. Courtesy of the DevTech Research Group at Tufts University.

Many are familiar with Bers through her work on ScratchJr, a programming language in which even students too young to read and write can put actions together in a sequence to create interactive stories, games and animations.

Last June, with NSF support, Bers and her colleagues released ScratchJr as a free app for children 5 to 7. (A Kickstarter campaign in May raised $75,000 to adapt the app for Android and iPad.) As of February 2015, ScratchJr had more than 500,000 downloads worldwide.

Speaking at NSF, Bers described her latest project, the KIWI robotic kit (subsequently renamed KIBO), which teaches programming through robotics, without screens, tablets, or keyboards.

Using KIBO, students scan wooden blocks to give robots simple commands, in the process learning sequencing, one of the most important skills for early age groups. By combining a series of commands, kids make the robot move, dance, sing, sense the environment or light up.
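To make the idea of sequencing concrete, here is a minimal sketch of what happens when a row of scanned blocks becomes a program: each block maps to one action, and the robot performs them strictly in order. The block labels, the BEGIN/END framing and the `ToyRobot` class are hypothetical illustrations, not KIBO's actual block set or firmware.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ToyRobot:
    """Hypothetical stand-in robot that simply records what it was told to do."""
    log: List[str] = field(default_factory=list)

    def forward(self) -> None:
        self.log.append("move forward")

    def spin(self) -> None:
        self.log.append("spin")

    def beep(self) -> None:
        self.log.append("beep")

    def light_on(self) -> None:
        self.log.append("light on")


# Hypothetical block labels mapped to robot actions.
ACTIONS = {
    "FORWARD": ToyRobot.forward,
    "SPIN": ToyRobot.spin,
    "BEEP": ToyRobot.beep,
    "LIGHT": ToyRobot.light_on,
}


def run_program(blocks: List[str], robot: ToyRobot) -> List[str]:
    """Execute scanned blocks one after another; the order is the lesson."""
    if not blocks or blocks[0] != "BEGIN" or blocks[-1] != "END":
        raise ValueError("a program must start with BEGIN and finish with END")
    for label in blocks[1:-1]:
        if label not in ACTIONS:
            raise ValueError(f"unknown block: {label}")
        ACTIONS[label](robot)
    return robot.log


dance = ["BEGIN", "FORWARD", "SPIN", "BEEP", "SPIN", "END"]
print(run_program(dance, ToyRobot()))  # ['move forward', 'spin', 'beep', 'spin']
```

Swapping two blocks changes what the robot does, which is exactly the cause-and-effect relationship that sequencing activities aim to teach.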

With a Small Business Innovation Research grant from NSF, Bers started KinderLab Robotics and, using insights from her 15 years of research, transformed the KIWI prototype into a widely available cyberlearning toy that could reach a large number of kids.

The Confused Robot. KIWI was prototyped by the DevTech research group through a grant from the National Science Foundation.

KIBO shipped its first several hundred units in 2014, but, according to Bers, building and selling KIBO was only a small part of the challenge.

"We don't want to give away the technology and wash our hands of it," Bers said. "We're training the whole individual."

For that reason, KIBO comes with a curriculum, lesson plans, badges, design journals and even teacher and parent training.

Both the KIBO robotic kit and the ScratchJr programming language have left the academic ivory tower and are now available to the wider public.

"When we teach children how to read and write, we don't expect everyone to become a journalist or a novelist," Bers said, speaking about KIBO in the New York Times. "But we believe they'll be able to think in new ways because it opens the doors to thinking. We believe the same thing for the skills of programming and engineering."

4. Virtual Role-Play and Robo-Tutors

Communication skills are critical in a global economy. Since communication often occurs across cultures, people must know more than just the vocabulary and grammar of a foreign language. They must also have cultural awareness - a recognition of the "dos and don'ts" that each culture maintains - in order to communicate smoothly, effortlessly and with confidence.

Virtual role-play - where learners engage in simulated encounters with artificially intelligent agents that behave and respond in a culturally accurate manner - has been shown to be effective at teaching cross-cultural communication.

"You learn by playing a role in a simulation of some real life situation," said W. Lewis Johnson, a former professor at the University of Southern California and co-founder of Alelo Inc., a company that makes cyberlearning tools. "You practice communication with some artificially intelligent interactive characters that will listen and respond to you, depending on what you say and do. It helps develop fluency but it also helps to develop confidence."

A still from a cross-cultural, virtual role-playing scenario developed by Alelo. Video courtesy of Alelo, Inc.

With an award from NSF, W. Lewis Johnson and his team at Alelo (a Hawaiian word that means "language" or "tongue") developed a range of products to teach cross-cultural communication using virtual worlds. Alelo's tools have already achieved success in military training and English-as-a-second-language programs and are now being applied to a wide range of learning applications.

"We found in our military training that when soldiers use this approach, once they get into a foreign country -- let's say they're sitting down with local leaders -- they feel as if it's a familiar situation to them, even though they've never experienced it in real life before," Johnson said. "That degree of comfort is extremely powerful."

As an example, Johnson showed a role-playing scenario that orients officers to the etiquette expected at a Chinese banquet. (The goal: Don't get drunk!)

"How do you show proper respect for your host in participating in these toasts but not fall down drunk in the process?" Johnson asked. "That's an effective communication problem. Role-playing teaches some of the communication skills that you could use if you find yourself in this situation."

Importantly, Alelo's virtual role-playing tool lets learners practice conversation and etiquette until they get them right.

A demonstration of the "Banquet" scenario from the Virtual Cultural Awareness Trainer course, developed for Joint Knowledge Online by Alelo Inc. Video courtesy of Alelo, Inc.

To develop a version to teach English as a second language (ESL) to those in the U.S., the researchers interviewed immigrants to determine what cultural issues they found most problematic. The instruction they created -- available in multiple languages -- explains how to handle different situations that one might face as a new immigrant in the United States and provides tips on culturally appropriate behavior.

Students can practice on a range of devices, allowing them to use the program in preparation for classroom activities, as follow-up to classroom activities or as needed in the field. The ESL program even offers a virtual coach who provides help and feedback.

In 2012, Alelo partnered with the Commonwealth of Virginia to develop and test virtual role-play learning products for conversational practice in Chinese. Tammy McGraw, the former director of digital innovations and outreach at the Virginia Department of Education, had met Johnson at a Wired conference years earlier and, although Alelo was demonstrating cyberlearning technologies for soldiers, McGraw was struck by how the virtual characters were able to impart both linguistic and cultural lessons.

Moreover, the software could address the shortage of qualified teachers of Chinese, Arabic and other less commonly taught languages, while allowing more students to take classes that were over-enrolled.

The first Web-based course was tested last year via Virtual Virginia, a statewide virtual school program, and received overwhelmingly positive ratings from the students.

"The virtual classes enabled students to be more self-directed than teachers could normally support," McGraw said.

Recently, McGraw and Johnson have been adapting the insights learned in virtual role-playing research for a new platform: a robo-tutor.

With support from NSF, Alelo has taken RoboKind's lifelike social robot and equipped it with the ability to converse in Chinese. The technology gives learners abundant opportunities to practice their conversational skills and lowers the entry barrier for difficult languages such as Chinese.

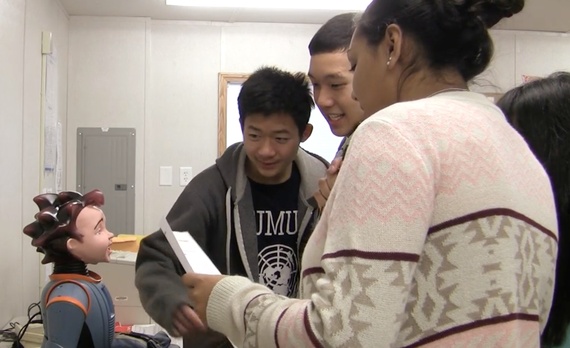

With the RALL-E project, Alelo is developing a prototype lifelike robot that converses in a foreign language, and is studying its use in educational settings. Courtesy of Alelo, Inc.

"It's non-threatening," McGraw said. "The robot is not going to be offended if you say the wrong thing. He's not going to laugh at you if you pronounce Chinese incorrectly. But he also has the ability to engage learners through active interaction."

Though the robot is still in the prototype phase, the researchers imagine it could be used in the classroom, with kids coming up to engage with it at will. It could also be used outside of formal class as a personal tutor. Teachers at Thomas Jefferson High School for Science and Technology in Alexandria, Virginia signed on to try out the computer-based pilot.

Students interact with the RALL-E prototype language tutor robot. Courtesy of Alelo, Inc.

By combining artificial intelligence and robotics with insights from virtual role-playing research, Alelo is pioneering new avenues of personalized education.

"The question we're trying to answer is: what are the aspects of the technology that are most important for mastering communicative skills?" McGraw said. "We have two different realizations of virtual role-play technology. They have different strengths, different weaknesses. What are they going to be good for? Which is good for what? Doing this research, we hope to get some answers to those questions."

5. Tools for Real-time Visual Collaboration

Increasingly, professionals in all sorts of fields need to work together to rapidly design new and complex solutions to pressing problems. Often, these teams are geographically or temporally distributed.

"We've had great tools in the past to collaborate on texts, but we haven't had great tools for us to collaborate visually," said Kylie Peppler, assistant professor of learning sciences at Indiana University. "As a result, much of today's innovative design work still occurs through pen-and-paper sketching, which is challenging to share and systematically build upon."

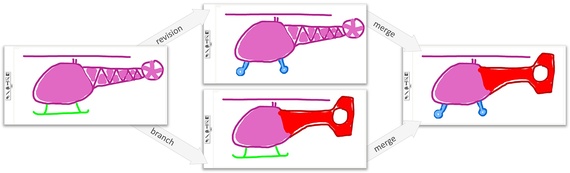

Two parallel paths of concept sketches of a toy helicopter in skWiki. skWiki supports multiple co-existing revision histories for the same multimedia object.

In their presentation at NSF, Peppler and Karthik Ramani, a Professor of Mechanical Engineering at Purdue University, described two new interactive collaboration tools they've designed -- skWiki (or Sketch Wiki, pronounced 'squeaky') and Juxtapoze -- that significantly improve creative collaboration in the visual design process.

For the last 18 years, Ramani has taught a class on toy design at Purdue. The university purchased its first 3D printers in 1995 and has consistently been on the technological cutting edge. But digital collaboration among students had remained a challenge.

"Computers have been individualistic devices," said Ramani. "But a new class of technology is emerging that allows us to engage in our individual learning process and also in a collaborative process."

skWiki is an example of such a technology. It allows many participants to collaboratively create digital multimedia projects on the web using many media types, including text, hand-drawn sketches, and photographs.

An overview of skWiki. Courtesy of C Design Lab.

Whereas collaborative writing tools like Wikipedia and Google Docs don't allow for divergent thinking, skWiki does, the researchers say. Users can contribute elements to others' designs, or clone components from their peers' work and integrate them into their own.
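One way to picture "multiple co-existing revision histories" is a revision tree rather than a single timeline: every saved version points to the version it came from, so two people can branch from the same sketch and borrow pieces from each other without overwriting anything. The minimal sketch below assumes that model; the class and method names are hypothetical and are not skWiki's actual data structures.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Revision:
    rev_id: int
    parent: Optional[int]  # None for the very first sketch
    author: str
    strokes: List[str]     # simplified stand-in for the sketch content


class RevisionTree:
    """Hypothetical branching history: revisions form a tree, not a line."""

    def __init__(self) -> None:
        self.revisions: Dict[int, Revision] = {}
        self._next_id = 0

    def commit(self, parent: Optional[int], author: str, strokes: List[str]) -> int:
        """Save a new revision on top of any existing one (or as a new root)."""
        rev = Revision(self._next_id, parent, author, list(strokes))
        self.revisions[rev.rev_id] = rev
        self._next_id += 1
        return rev.rev_id

    def branch(self, from_rev: int, author: str, new_strokes: List[str]) -> int:
        """Fork from any revision, even a peer's; the original stays untouched."""
        base = self.revisions[from_rev].strokes
        return self.commit(from_rev, author, base + new_strokes)

    def clone_into(self, source_rev: int, target_rev: int, author: str, pick: List[str]) -> int:
        """Copy selected components from a peer's revision into your own branch."""
        borrowed = [s for s in self.revisions[source_rev].strokes if s in pick]
        base = self.revisions[target_rev].strokes
        return self.commit(target_rev, author, base + borrowed)

    def history(self, rev_id: int) -> List[int]:
        """Follow parent links back to the root: one path through the tree."""
        path: List[int] = []
        current: Optional[int] = rev_id
        while current is not None:
            path.append(current)
            current = self.revisions[current].parent
        return list(reversed(path))


tree = RevisionTree()
root = tree.commit(None, "ana", ["body"])
a = tree.branch(root, "ana", ["rotor"])      # one helicopter concept
b = tree.branch(root, "ben", ["wings"])      # a divergent concept from the same root
c = tree.clone_into(a, b, "ben", ["rotor"])  # Ben borrows Ana's rotor
print(tree.history(c))            # [0, 2, 3]
print(tree.revisions[c].strokes)  # ['body', 'wings', 'rotor']
```

Because nothing is ever overwritten, both design paths remain available for comparison, which is the property that supports divergent thinking.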

They released the tool to the public in March 2014 and presented the project at the 2014 ACM Conference on Human Factors in Computing Systems last June.

A second tool they developed, Juxtapoze, lets students draw shapes that the program matches to existing web images, leading to serendipitous discoveries and open-ended creativity.

"Juxtapoze lowers the barriers to entry for people to make creative things in a very fluid manner," said Peppler.

A plug-in for skWiki (the first of many, according to the researchers), Juxtapoze quickly matches scribbles generated by the designer with images found on the Internet, leading to serendipitous connections: for instance, a circle may become a wheel, or a squiggly line, a snake.
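The article does not describe how Juxtapoze matches shapes, so the sketch below substitutes a much simpler, well-known idea in the spirit of the "$1" gesture recognizer: resample each stroke to a fixed number of points, move it to the origin, scale it uniformly, and pick the library shape with the smallest average point-to-point distance. This is a swapped-in technique for illustration only. It ignores rotation, and the tiny two-shape "library" is hypothetical.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def resample(points: List[Point], n: int = 32) -> List[Point]:
    """Resample a stroke to n points spaced evenly along its length."""
    dists = [0.0]  # cumulative arc length at each input point
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        dists.append(dists[-1] + math.hypot(x1 - x0, y1 - y0))
    total = dists[-1]
    out: List[Point] = []
    j = 0
    for i in range(n):
        target = total * i / (n - 1)
        # Advance to the segment that contains this arc-length position.
        while j < len(points) - 2 and dists[j + 1] < target:
            j += 1
        seg = dists[j + 1] - dists[j]
        t = 0.0 if seg == 0 else (target - dists[j]) / seg
        (x0, y0), (x1, y1) = points[j], points[j + 1]
        out.append((x0 + t * (x1 - x0), y0 + t * (y1 - y0)))
    return out


def normalize(points: List[Point]) -> List[Point]:
    """Center on the centroid and scale so the larger bounding-box side is 1."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    cx, cy = sum(xs) / len(xs), sum(ys) / len(ys)
    size = max(max(xs) - min(xs), max(ys) - min(ys)) or 1.0
    return [((x - cx) / size, (y - cy) / size) for x, y in points]


def shape_distance(a: List[Point], b: List[Point]) -> float:
    """Average point-to-point distance after resampling and normalizing both strokes."""
    pa, pb = normalize(resample(a)), normalize(resample(b))
    return sum(math.hypot(xa - xb, ya - yb) for (xa, ya), (xb, yb) in zip(pa, pb)) / len(pa)


def best_match(scribble: List[Point], library: Dict[str, List[Point]]) -> str:
    """Name of the library shape closest to the scribble."""
    return min(library, key=lambda name: shape_distance(scribble, library[name]))


# Hypothetical library: a circle ("wheel") and a sine wave ("snake").
wheel = [(math.cos(2 * math.pi * k / 40), math.sin(2 * math.pi * k / 40)) for k in range(41)]
snake = [(4 * math.pi * k / 80, math.sin(4 * math.pi * k / 80)) for k in range(81)]
library = {"wheel": wheel, "snake": snake}

# A bigger, off-center circle drawn with fewer points still reads as a wheel.
scribble = [(10 + 5 * math.cos(2 * math.pi * k / 25), 10 + 5 * math.sin(2 * math.pi * k / 25)) for k in range(26)]
print(best_match(scribble, library))  # wheel
```

A production system matching against web images would need far richer shape descriptors; the point here is only that a rough scribble can be compared with stored shapes independent of where it was drawn and how large it is.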

With Juxtapoze, "technology starts to be a partner in the design process and gets students to think in more divergent ways," she said.

Ramani and Peppler believe the two technologies have the potential to transform the design process into a more collaborative, creative and efficient endeavor.

An overview of Juxtapoze. Courtesy of C Design Lab.

"We've created a platform that can change things in a strategic way to maximize learning," said Ramani. "This new class of tools allows us to have better fluidity between our ideas and our design and lets students accomplish complicated engineering concepts in a short time scale."

Beyond enabling collaboration, skWiki and Juxtapoze have the capacity to generate useful data about the creative design process itself, a potentially rich source for research into the impact of technology and collaboration on learning processes.

"Process is more important than final product, but it's often not treated that way," Peppler said. "These tools capture and privilege the process."

6. Fusing data collection, computational modeling and data analysis

Simulation and modeling have come to be regarded as the "third pillar" of science, but they haven't yet earned a permanent place in the classroom. Chad Dorsey, of the Concord Consortium, and Uri Wilensky, of Northwestern University, are working to change that.

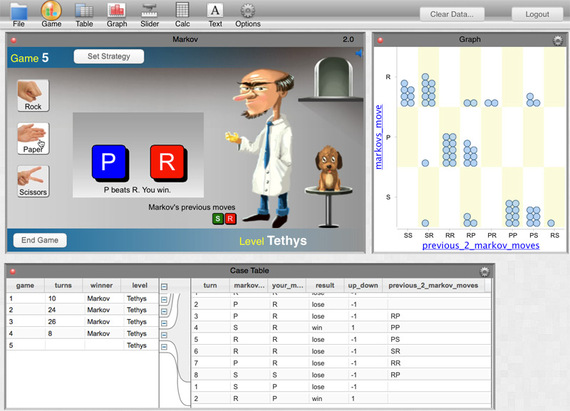

Dorsey, Wilensky and colleagues won the first cyberlearning integration and deployment grant from NSF in 2012 for their work to integrate their modeling environments with statistical software, called CODAP (Common Online Data Analysis Platform), developed by William Finzer, now at Concord Consortium.

CODAP builds on the NSF-funded Data Games project. In the example seen here, you learn to read the conditional probability graph to beat Dr. Markov and save Madeline the dog.

The project aims to bring together a wide variety of important educational technology innovations into a seamless whole, enabling students to engage in extended scientific inquiry more fluidly than is currently possible.

Through the project's work, students can gather data from a classroom experiment using a probe or sensor or examine the activity of a model or simulation, then bring the data from both into a unique data exploration environment where they can quickly make sense of it and adjust their findings for further experimentation.

One of the important technologies incorporated as part of the project is NetLogo, an agent-based modeling environment. Agent-based modeling environments simulate the simultaneous actions of large numbers of actors in an attempt to re-create and predict the formation of complex phenomena. Such environments can grow complex, emergent behaviors.

NetLogo was developed by Wilensky, professor of Learning Sciences, Computer Science and Complex Systems at Northwestern University and director of the Center for Connected Learning and Computer-Based Modeling.

Wilensky has been working on agent-based modeling environments for more than twenty-five years. Many phenomena in both the natural and social worlds can be modeled this way, including the workings of the immune system, molecular interactions in materials, ecosystems, economic activity and climate change.

Wilensky has long advocated for a universal literacy in modeling and widespread use of modeling environments in STEM classrooms. Dozens of Wilensky's research publications have demonstrated students' increased understanding of science when learning through computational modeling.

"Modeling has become a central part of science. But our classrooms don't look at it that way," Wilensky said.

A product of his research is the NetLogo agent-based modeling environment. NetLogo was designed with the principle of "low threshold, high ceiling" and has been used in thousands of schools and been employed in thousands of research papers. It stands as a model for how cyberlearning systems are designed, researched and implemented on a large scale.

Within NetLogo is a large library of sample models where simulation and modeling help reveal the underlying dynamics of the system being studied. For instance, in a forest fire demonstration, students simulate how fires spread under various conditions to find the tipping point at which a small fire becomes much more damaging. Other sample simulations model the dynamics of predator/prey interactions in an ecosystem (below).

An example simulation from NetLogo, here modeling the dynamics of predator/prey interactions in an ecosystem. Courtesy of NetLogo.
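The forest fire demonstration described above can be approximated in a few lines. The sketch below is a Python re-expression of the general idea, not the NetLogo library model's code: trees are planted at random on a grid with a given density, every tree in the left column is ignited, and fire spreads to the four neighboring cells until it runs out of fuel. The grid size and random seed are arbitrary assumptions. On a square grid, the fraction of trees burned rises sharply near a density of about 59%, the site-percolation threshold, which is the tipping point students look for.

```python
import random
from collections import deque


def burn_fraction(density: float, size: int = 60, seed: int = 0) -> float:
    """Fraction of trees that burn when a fire starts along the left edge."""
    rng = random.Random(seed)
    # Each grid cell holds a tree with probability `density`.
    trees = [[rng.random() < density for _ in range(size)] for _ in range(size)]
    total = sum(row.count(True) for row in trees)
    if total == 0:
        return 0.0

    burned = [[False] * size for _ in range(size)]
    frontier = deque()
    for r in range(size):  # ignite every tree in the leftmost column
        if trees[r][0]:
            burned[r][0] = True
            frontier.append((r, 0))

    count = len(frontier)
    while frontier:  # fire spreads to the four neighboring cells
        r, c = frontier.popleft()
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < size and 0 <= nc < size and trees[nr][nc] and not burned[nr][nc]:
                burned[nr][nc] = True
                count += 1
                frontier.append((nr, nc))
    return count / total


for d in (0.40, 0.55, 0.60, 0.65, 0.80):
    print(f"density {d:.2f}: {burn_fraction(d):.0%} of trees burned")
```

Running the sweep with several seeds, rather than one, is a natural next step: near the threshold, individual runs vary widely, which is itself something students can discover.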

In addition to NetLogo, the project integrates Concord Consortium's Molecular Workbench software that enables students to simulate a wide variety of physics and chemistry phenomena, including Newtonian mechanics, fluid dynamics, electromagnetism, states of matter and chemical bonds.

This environment is one of many developed by the Concord Consortium, a nonprofit organization in Concord, MA and Emeryville, CA that has been developing educational technology for over two decades.

Along with the Molecular Workbench, the Concord Consortium has developed a range of modeling and simulation technologies, from virtual laboratories, where students breed dragons to learn genetics, to 3D modeling environments that help students learn the engineering design process.

Their work also introduced the concept of probeware -- computer interfaces that enable students to collect data from classroom experiments easily in digital form.

All of these are critical technologies for helping students learn, said Dorsey, president and CEO of the Concord Consortium.

"Educational technology can make the invisible visible and explorable and enable students to think like a scientist," Dorsey said.

InquirySpace, the integration of all of these projects, brings sensors and probes together with computer-based models to create even richer cyberlearning experiences.

InquirySpace teachers and their high school students put aside traditional teaching and learning methods in favor of extended inquiry. Students design their own experiments, collect large data sets and easily perform complex analysis, just like real scientists. Courtesy of the Concord Consortium.

By embedding simulations and sensor data collectors in the classroom, Dorsey and Wilensky are able to aid students in gathering, visualizing and analyzing data from multiple sources.

A principal innovation of InquirySpace is the integration of the Concord Consortium's CODAP data exploration software with the modeling environments. This integration enables students to plan extended investigations of models, to do sophisticated analyses of the model runs, and to engage in arguments from evidence.

CODAP's unique drag-and-drop features let learners examine data from model runs across its graphical visualization environment. This permits students to examine their experimental data more completely, seeing all of their experimental runs as individual data points and more easily identifying places where additional data need to be collected or where errors have occurred.

Explore air resistance with a simulation of a parachute jumper.
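As a rough illustration of treating each model run as one data point, the sketch below simulates a falling jumper with quadratic air resistance, m·dv/dt = m·g − k·v², using simple Euler steps. It then records one row per drag setting, comparing the simulated final speed with the predicted terminal velocity √(m·g/k). This is not the Concord Consortium's simulation or CODAP itself. The 80 kg mass and the drag constants are assumed values chosen only to contrast free fall with an open parachute.

```python
import math
from typing import Dict, List

G = 9.81  # gravitational acceleration, m/s^2


def simulate_fall(mass: float, drag_k: float, dt: float = 0.01, t_end: float = 30.0) -> List[float]:
    """Euler-integrate m dv/dt = m g - k v^2 from rest; return the speed at each step."""
    v = 0.0
    speeds = [v]
    for _ in range(round(t_end / dt)):
        accel = G - (drag_k / mass) * v * v
        v += accel * dt
        speeds.append(v)
    return speeds


def sweep(mass: float, drag_values: List[float]) -> List[Dict[str, float]]:
    """One row per model run, ready to be plotted as individual data points."""
    rows = []
    for k in drag_values:
        rows.append({
            "drag_k": k,
            "simulated_terminal": simulate_fall(mass, k)[-1],
            "predicted_terminal": math.sqrt(mass * G / k),
        })
    return rows


# Assumed values: an 80 kg jumper, from low drag (free fall) to high drag (parachute open).
for row in sweep(80.0, [0.25, 1.0, 30.0]):
    print(f"k={row['drag_k']:>5}: simulated {row['simulated_terminal']:.1f} m/s, "
          f"predicted {row['predicted_terminal']:.1f} m/s")
```

A row whose simulated value failed to approach the prediction would be the kind of anomaly the paragraph above describes: a sign that a run was too short or that more data are needed.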

All of the components of the InquirySpace environment are web-based and widely shareable, enabling educators to customize the activities for their own contexts.

The project is unique in its assessments as well as its activities. In lieu of written reports, students record screenshots with voice descriptions of their experimental results, which the researchers found better uncovers student thinking.

The researchers piloted InquirySpace in high school classrooms in Massachusetts and in Northwestern University classes in recent years. In 2015, Dorsey and Wilensky will disseminate InquirySpace to targeted groups for further study. Already, they're teaching workshops to prepare teachers to use the software in the classroom.

"The skills and literacy necessary to do modeling are an increasingly important part of science, and outside of science," Wilensky said. "We want to foster this literacy and make it part of the classroom."

7. Virtual Worlds and Augmented Reality: Increasing ecological understanding

Immersive interfaces, like virtual reality (VR) and augmented reality (AR), are becoming ever more powerful, eliciting investment from the likes of Google, Samsung and Microsoft. As these technologies become commercially available, their use in education is expected to grow.

Chris Dede, professor of learning technologies at Harvard's Graduate School of Education, has studied immersion and learning technologies for twenty years.

"After 20 minutes of watching a good movie, psychologically you're in the context of the story, rather than where your body is sitting," Dede said.

EcoMUVE is a curriculum that was developed at the Harvard Graduate School of Education that uses immersive virtual environments to teach middle school students about ecosystems and causal patterns.

Dede's research tries to determine how immersive interfaces can deepen what happens in classrooms and homes and complement rich, educational real world learning experiences, like internships.

Two decades ago, with funding from NSF, Dede started investigating head-mounted displays and room-sized virtual environments. Some of his early immersive cyberlearning projects included River City (a virtual world in which students learned epidemiology, scientific inquiry, and history) and Science Space (virtual reality physics simulations).

In his most recent projects, Dede and his colleagues have tackled the subject of environmental education, teaching students about stewardship.

"Our projects take a stance. They're not just about understanding," Dede said. "There's a values dimension of it that's important and makes it harder to teach. This is a good challenge for us."

With support from NSF and Qualcomm's Wireless Reach initiative, Dede and his team developed EcoMUVE, a virtual world-based curriculum that teaches middle school students about ecosystems, as well as about scientific inquiry and complex causality.

The project uses a Multi-User Virtual Environment (MUVE), a 3D world similar to those found in video games, to recreate real ecological settings within which students explore and collect information.

The goal of the EcoMUVE project is to help students develop a deeper understanding of ecosystems and causal patterns with a curriculum that uses Multi-User Virtual Environments (MUVEs).

At the beginning of the two- to four-week EcoMUVE curriculum, the students discover that all of the fish in a virtual pond have died. They then work in teams to determine the complex causal relationships that led to the die-off. The experience immerses students in the role of ecosystem scientists.

"It's like a jigsaw puzzle," Dede explained. "Each team member does part of the exploring, and they must work together to solve the mystery."

At the end of the investigation, all of the students participate in a mini scientific conference where they present their findings and the research behind them.

EcoMUVE was released in 2010 and received 1st Place in the Interactive Category of the Immersive Learning Award in 2011 from the Association for Educational Communications and Technology.

Writing in the Journal of Science Education and Technology, Dede and his team concluded that the tools and context provided by the immersive virtual environment helped support student engagement in modeling practices related to ecosystem science.

Whereas EcoMUVE's investigations occurred in a virtual world, the group's follow-up, EcoMOBILE, takes place in real world settings that are heightened by digital tools and augmented reality.

"Multi-user virtual environments are like 'Alice in Wonderland.' You go through the window of the screen and become a digital person in an imaginary world," Dede said. "Augmented reality is like magic eyes that show an overlay on the real world that make you more powerful."

The researchers took the same pond (Black's Nook Pond in Cambridge, Massachusetts) and asked themselves: Why not augment the pond itself and have different stations around the pond where students can go and learn? What emerged was a cyberlearning curriculum centered on location-based augmented reality (AR).

Dede's team created a smartphone-based AR game that students play in the field. Initial studies show significant learning gains with AR compared with a regular field trip.
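Location-based AR of this kind generally relies on geofencing: the phone compares its GPS position with a list of stations and unlocks a station's content once the student is close enough. The minimal sketch below assumes that approach and uses the haversine formula for distance on the Earth's surface. The station names, coordinates and 15 m trigger radius are placeholders for illustration, not EcoMOBILE's actual stations or the real pond's location.

```python
import math
from dataclasses import dataclass
from typing import List, Optional

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in meters


@dataclass
class Station:
    name: str
    lat: float
    lon: float
    radius_m: float  # how close a student must be before the content appears


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def active_station(lat: float, lon: float, stations: List[Station]) -> Optional[Station]:
    """Nearest station whose trigger radius contains the student's position, if any."""
    hits = [(haversine_m(lat, lon, s.lat, s.lon), s) for s in stations]
    hits = [(d, s) for d, s in hits if d <= s.radius_m]
    return min(hits, key=lambda pair: pair[0])[1] if hits else None


# Placeholder coordinates for illustration only; they are not the real pond.
STATIONS = [
    Station("inlet: water sampling", 42.3800, -71.1300, 15.0),
    Station("shoreline: plant survey", 42.3805, -71.1290, 15.0),
]

here = active_station(42.3801, -71.1300, STATIONS)  # about 11 m from the inlet station
print(here.name if here else "no station nearby")   # inlet: water sampling
```

Real deployments must also cope with GPS error of several meters, which is one reason trigger radii are usually generous.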

In EcoMOBILE, students' field trip experiences are enhanced by using two forms of mobile technology for science education - mobile broadband devices and environmental probeware.

The virtual worlds and augmented reality are containers in which learning can be developed across a range of subjects, Dede says. The same approach could be applied to economics or local history.

But as appealing as the technology may be, truly successful immersive learning technologies are all about the "3 E's" according to Dede: evocation, engagement and evidence.

Evocation and engagement are fairly easy in VR and AR applications. And increasingly, gathering evidence is too.

Dede's project uses the rich second-by-second information on student behavior gathered by the digital tools to determine how well the project is teaching the students the core concepts. This allows for more accurate individual evaluation.
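The step from raw activity logs to evidence of learning can be sketched simply: group time-stamped events by student and derive a few indicators, such as how many distinct stations a student explored, how many measurements they took and how long they stayed active. The event format, event types and indicators below are hypothetical and are not the project's actual logging schema or assessment rubric.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical log format: (student_id, seconds since start, event_type, detail)
Event = Tuple[str, int, str, str]


def summarize(events: List[Event]) -> Dict[str, Dict[str, float]]:
    """Turn a raw activity log into a few simple per-student indicators."""
    by_student: Dict[str, List[Event]] = defaultdict(list)
    for event in events:
        by_student[event[0]].append(event)

    summary: Dict[str, Dict[str, float]] = {}
    for student, evs in by_student.items():
        evs.sort(key=lambda e: e[1])  # order each student's events by time
        stations = {detail for _, _, kind, detail in evs if kind == "visit"}
        measurements = sum(1 for _, _, kind, _ in evs if kind == "measure")
        summary[student] = {
            "stations_visited": len(stations),
            "measurements": measurements,
            "minutes_active": (evs[-1][1] - evs[0][1]) / 60,
        }
    return summary


log = [
    ("s1", 0, "visit", "inlet"),
    ("s1", 30, "measure", "dissolved_oxygen"),
    ("s1", 300, "visit", "shore"),
    ("s1", 360, "measure", "turbidity"),
    ("s2", 10, "visit", "inlet"),
    ("s2", 70, "visit", "inlet"),
]
print(summarize(log))
```

Indicators like these are only a starting point; interpreting them as evidence of understanding requires the kind of validated rubric the researchers describe.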

"We can now collect rich kinds of big data," Dede said. "If we understand how to interpret this data, they can help us with assessment."

With an award from NSF, Dede and his colleagues developed a rubric for virtual performance assessments, working towards a vision of diagnostics happening in real-time that impact the course of the learning.

"The embedded diagnostic assessments, or 'stealth assessments,' provide rich diagnostic information for students and teachers," Dede said.

The team has even developed animated pedagogical agents (like "Dr. C", a simulated NASA Mars expert) as a way to bring people-based expertise to learning in a scalable way.

The latest direction for Dede's research: using GoPro cameras to capture the inter- and intra-personal dimensions of students' activities, and equipping the AR devices with sensors so students can map critical biomes.

"There's a whole bunch of great citizen science that we can see coming out of this," he said.

--

Today, as in 1984, cyberlearning technologies don't work for every student in every situation. The spark of learning is difficult if not impossible to predict.

But beyond instilling computational thinking and imparting long-lived instruction, cyberlearning has another important purpose: to prepare the next generation for an increasingly technological world and for technologies we can't yet predict.

The adaptability cultivated by a technological "other" in the classroom is a valuable end in and of itself.

Follow Aaron Dubrow on Twitter: www.twitter.com/aarondubrow
